Why ECS Task Definitions Kept Changing on Every Apply

Every Terraform apply was creating a new ECS task definition revision even when nothing had actually changed. Our test environment had accumulated 17,000 task definitions. AWS Config records each task definition as a configuration item and charges per item recorded, so the churn was quietly adding to our costs.

In AWS ECS, a task definition is a blueprint that describes how a container should run: what image to use, environment variables, resource limits, health checks, and so on. Task definitions are immutable, so whenever Terraform sees a difference between its configuration and what AWS has stored, it registers a new revision rather than updating the existing one. When that happens on every apply with no real change, something is off.

Finding the root cause

The way to debug this is to compare what Terraform has in state against what the AWS API actually returns. Pull the task definition from Terraform state (terraform state show on the task definition resource, or terraform show -json for the whole state) and diff its container definitions against the output of aws ecs describe-task-definition. The differences tell you exactly what Terraform thinks it needs to change.

Two causes came up.

Environment variable ordering. AWS reorders environment variables alphabetically when storing a task definition. Terraform was building the environment variable list using merge(), a built-in function that combines maps but does not guarantee key order. On each plan, the order could differ from what AWS had stored, so Terraform saw a change that wasn’t really there.

Missing properties in the container templates. Some optional fields were absent from the JSON templates we used to define containers. AWS fills these in with default values and includes them in the API response. When Terraform compared its template against the API response, it saw those extra fields as additions it needed to remove — which triggered another replacement.

Fields like healthCheck.interval, healthCheck.retries, healthCheck.timeout, systemControls, mountPoints, and portMappings all fell into this category.

The fix

For environment variable ordering, the fix is to sort the keys explicitly before building the list so Terraform and AWS always agree on the order.

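    locals {
      # Combine the base variables with any per-environment extras.
      merged_env_vars = merge(local.php_environment_json, var.extra_ecs_env_vars)

      # Build the ECS name/value list in explicit alphabetical key order.
      php_environment_ecs = [
        for k in sort(keys(local.merged_env_vars)) : {
          name  = k
          value = local.merged_env_vars[k]
        }
      ]
    }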
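
To see why the explicit sort matters, here is a minimal sketch with made-up values. None of the names below (env_from_template, env_as_stored, env_map, env_sorted) come from our configuration. It only illustrates that two lists with the same entries in a different order do not encode to the same JSON, while a list built from sorted keys matches the alphabetical order.

```hcl
locals {
  # Hypothetical values, used only to illustrate the comparison.
  env_from_template = [
    { name = "DB_HOST", value = "db.internal" },
    { name = "APP_ENV", value = "test" },
  ]

  # The same entries in alphabetical order, standing in for what AWS stores.
  env_as_stored = [
    { name = "APP_ENV", value = "test" },
    { name = "DB_HOST", value = "db.internal" },
  ]

  # The same data as a map, rebuilt into a list with sorted keys.
  env_map = {
    DB_HOST = "db.internal"
    APP_ENV = "test"
  }
  env_sorted = [
    for k in sort(keys(local.env_map)) : { name = k, value = local.env_map[k] }
  ]
}

output "unsorted_matches_stored" {
  # false: same entries, different order, so the encoded strings differ
  value = jsonencode(local.env_from_template) == jsonencode(local.env_as_stored)
}

output "sorted_matches_stored" {
  # true: the sorted list is in the same order as the stored one
  value = jsonencode(local.env_sorted) == jsonencode(local.env_as_stored)
}
```

Running terraform apply on this file alone (it needs no provider) prints both outputs, which makes it a cheap way to check the idea before touching a real task definition.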

For the missing properties, the fix is to add them explicitly to the container JSON templates to match what AWS returns. Once both sides agree, Terraform stops seeing phantom changes.
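
As a rough illustration of what "explicit" means here, the sketch below spells out the fields listed earlier. It uses jsonencode() on an HCL object instead of the JSON template files we actually use, and the container name, image, health check command, and port are hypothetical. The health check numbers and the tcp protocol are the documented ECS defaults. The hostPort value assumes awsvpc networking, where it matches containerPort.

```hcl
locals {
  # Hypothetical container definition; name, image, command, and port are
  # placeholders, not values from the real template.
  example_container = {
    name      = "php"
    image     = "example/php:latest"
    essential = true

    healthCheck = {
      command  = ["CMD-SHELL", "curl -f http://localhost:8080/ || exit 1"]
      interval = 30 # ECS default, stated explicitly
      retries  = 3  # ECS default, stated explicitly
      timeout  = 5  # ECS default, stated explicitly
    }

    portMappings = [
      {
        containerPort = 8080
        hostPort      = 8080  # assumes awsvpc networking
        protocol      = "tcp" # ECS default, stated explicitly
      }
    ]

    # Declared as empty lists so the template matches the API response.
    mountPoints    = []
    systemControls = []
  }
}

output "example_container_definitions" {
  # This JSON string is what would be passed to container_definitions.
  value = jsonencode([local.example_container])
}
```

The exact set of fields to add is whatever the diff against aws ecs describe-task-definition shows for your own task definition, so treat the values above as a starting point and not as a complete list.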

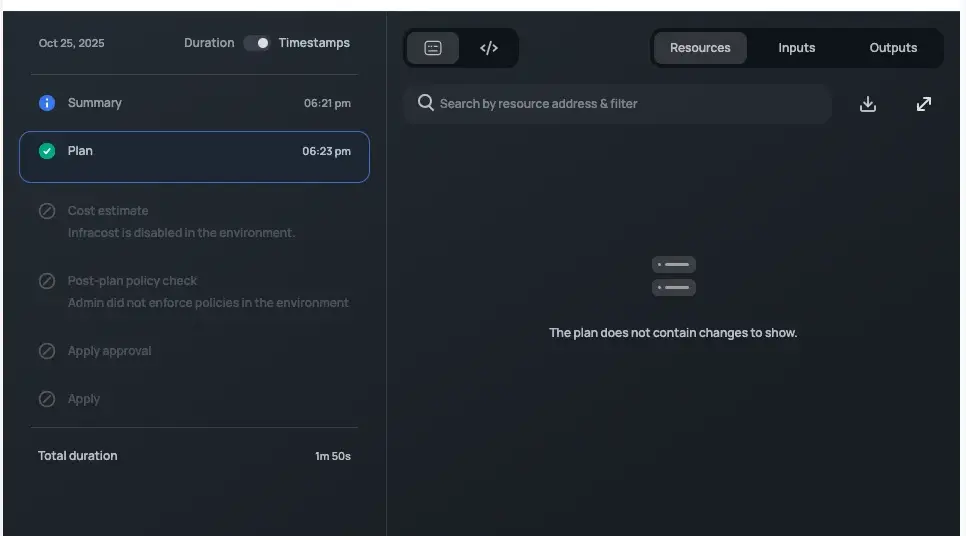

After both fixes, running the plan twice in a row showed no changes on the second run.